Support for numerical simulations through the timely provision of seismological input data

Solid Earth Sciences, Seismology

Research area

The last decade has seen a rise in the quantity of seismological data and, consequently, in the use of HPC to ensure their rapid processing. Urgent computing applications have been developed in seismology, especially where a timely impact assessment is needed, such as for civil protection purposes. Not only do these HPC procedures need to be optimized to work in near real time, but the full workflow also has to be reliable, starting from input data retrieval. In seismology, this means having pre-compiled, up-to-date and accessible inventories of metadata, as well as standardized and fast access to seismic data and to information on new events as soon as they become available.

Project goals

The goal of our project is the timely provision of the seismological input data that seismological HPC procedures, such as numerical simulations, need to ensure their reliability and accuracy. This implies having pre-compiled, up-to-date and accessible inventories of metadata, as well as standardized and fast access to seismic data and to information on new events as soon as they become available. This approach was demonstrated by supplying selected HPC users with high-quality input data to use in their applications, in the framework of the TeRABIT project.

Computational approach

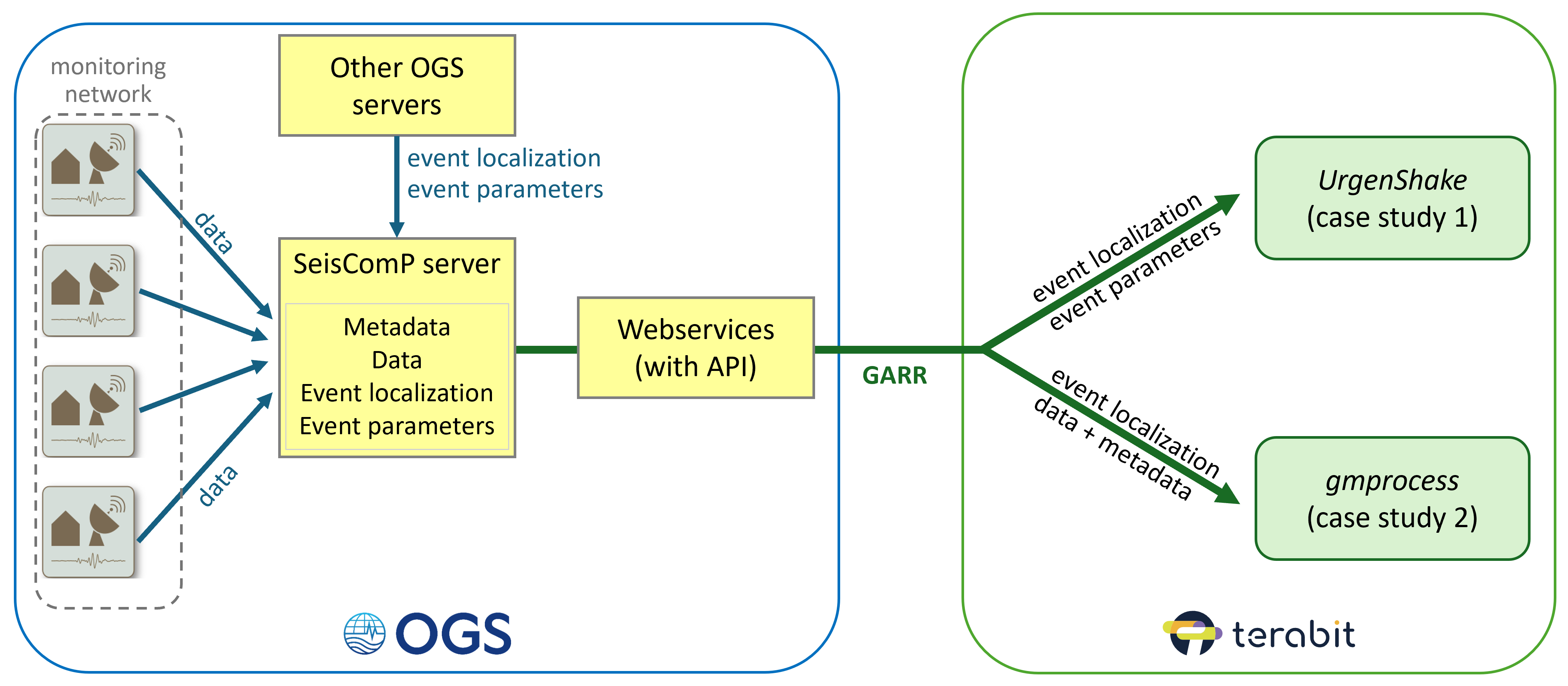

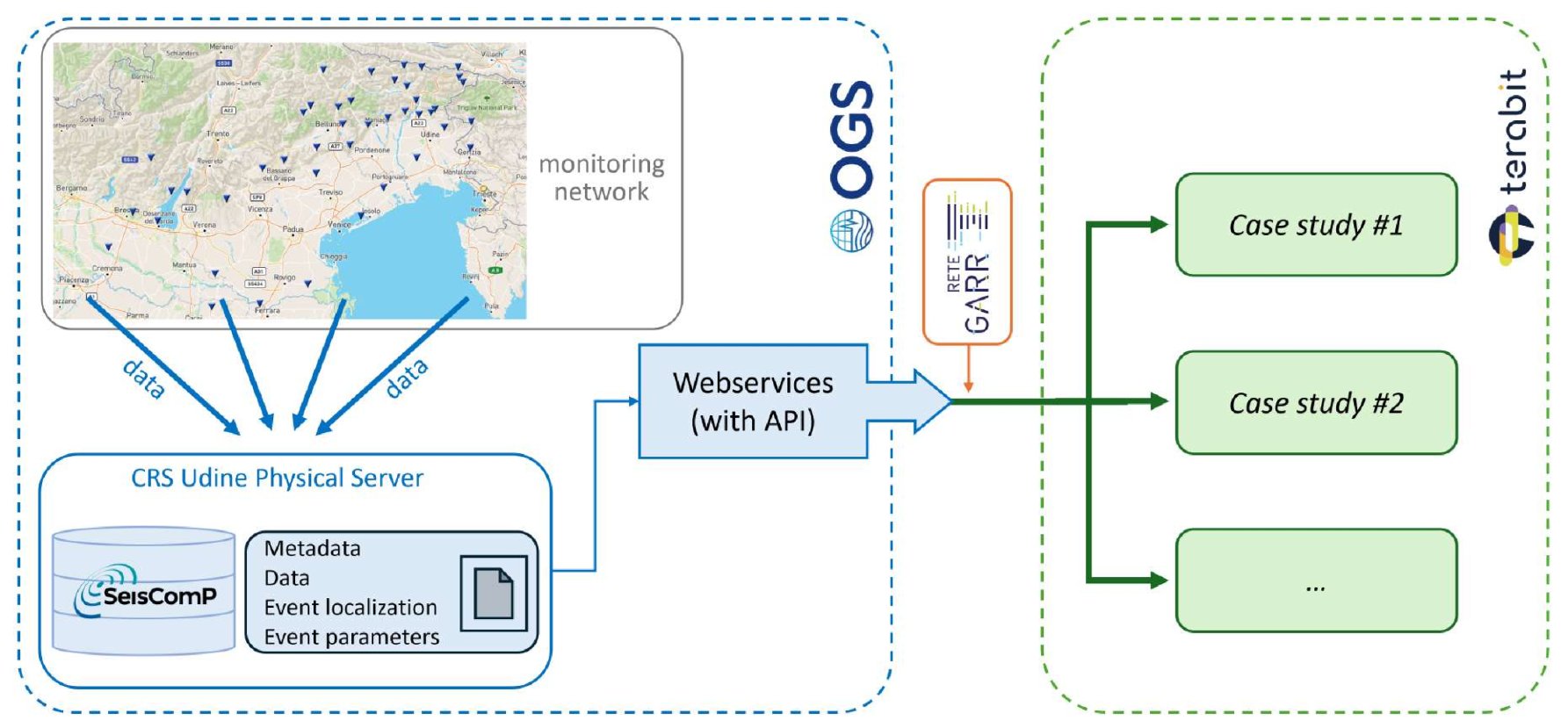

This project makes use of all three TeRABIT components: directly in the case of the GARR network, for the actual transmission of the input data, and indirectly for both the Cloud and HPC infrastructures, through the "gmprocess" and "UrgentShake" application examples. The main technological challenge will be rapid communication between infrastructures, which will have to be tested against the timing requirements of the HPC applications to ensure that data can be retrieved within a useful time. This requires, on the one hand, setting up the network authentication that grants access to data from one infrastructure to the other and, on the other hand, testing the response time of the webservices themselves.
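The response-time test mentioned above can be sketched as a small timing harness. This is only an illustration, not the project's actual test code: the helper names `timed_call` and `within_budget`, the stand-in `fake_fetch`, and the 5-second budget are all assumptions made for the example. In practice the stand-in would be replaced by a real webservice request, and the budget by the timing requirement of the HPC application.

```python
import time
from typing import Callable, Tuple


def timed_call(fetch: Callable[[], bytes]) -> Tuple[bytes, float]:
    """Run fetch() and return its payload with the elapsed wall-clock seconds."""
    start = time.perf_counter()
    payload = fetch()
    return payload, time.perf_counter() - start


def within_budget(elapsed_s: float, budget_s: float) -> bool:
    """True if a retrieval fits inside the time budget of the HPC application."""
    return elapsed_s <= budget_s


def fake_fetch() -> bytes:
    # Hypothetical stand-in for a real webservice request: waits briefly
    # and returns dummy bytes instead of actual seismic data.
    time.sleep(0.01)
    return b"waveform-bytes"


if __name__ == "__main__":
    BUDGET_S = 5.0  # assumed budget, not a figure from the project
    payload, elapsed = timed_call(fake_fetch)
    status = "OK" if within_budget(elapsed, BUDGET_S) else "TOO SLOW"
    print(f"retrieved {len(payload)} bytes in {elapsed:.3f} s: {status}")
```

Repeating the measurement over many requests, and from inside each infrastructure, would give a distribution of response times rather than a single value.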

Key results

The core of the project consisted of the preparation and management of standard seismological data collected by monitoring networks for a target area located in northeastern Italy. This included both organizing and making immediately accessible the archives of seismological data available at OGS for the past 10 years, and ensuring the real-time acquisition and transmission of newly collected data. To do so, we set up a server using the “SeisComP” seismological software, which natively supports the implementation of standard seismological webservices for the distribution of data, metadata and event information. Their interfaces ensure interoperability between distributed systems over the internet and easy, standardized data access through APIs. An additional event database was populated with all past earthquake localizations produced by OGS and was set up to be updated with new ones as soon as they become available. Once the base layer of the project was sufficiently developed, we tackled the challenge of communication between separate research infrastructures: the OGS one for the retrieval of data and the TeRABIT one for its use in HPC implementations. We tested the interaction of our service both with another TeRABIT case study, “gmprocess”, for near-real-time ground motion parameter estimation, and with an independent HPC implementation for machine-learning event localization. The tests led us to develop a system for token-based authentication to the webservices over the network.
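As an illustration of what standardized access through APIs can look like, the sketch below builds query URLs for the three kinds of service mentioned above (data, metadata, event information). It assumes the server exposes them in the FDSN web service layout (`/fdsnws/<service>/1/query`), which the text does not state explicitly. The host name, the network and station codes, and the helper names are hypothetical. The text also does not specify the token scheme, so the bearer-style `Authorization` header is an assumption made only to show where a token would be attached.

```python
from urllib.parse import urlencode
from urllib.request import Request

BASE_URL = "https://seismo.example.org"  # hypothetical host, not the OGS server
SERVICES = {"dataselect", "station", "event"}  # data, metadata, event information


def fdsn_query_url(service: str, **params: str) -> str:
    """Build an FDSN-style query URL: <base>/fdsnws/<service>/1/query?<params>."""
    if service not in SERVICES:
        raise ValueError(f"unknown service: {service!r}")
    return f"{BASE_URL}/fdsnws/{service}/1/query?{urlencode(params)}"


def authorized_request(url: str, token: str) -> Request:
    """Attach a token to a request (bearer-style header is an assumption)."""
    return Request(url, headers={"Authorization": f"Bearer {token}"})


if __name__ == "__main__":
    # Ten minutes of waveform data for a placeholder network and station
    data_url = fdsn_query_url(
        "dataselect",
        network="XX",
        station="STA01",
        starttime="2024-01-01T00:00:00",
        endtime="2024-01-01T00:10:00",
    )
    # Station metadata including instrument responses
    meta_url = fdsn_query_url("station", network="XX", level="response")
    # Events after a given time, above a minimum magnitude
    event_url = fdsn_query_url(
        "event", starttime="2024-01-01T00:00:00", minmagnitude="3.0"
    )
    for url in (data_url, meta_url, event_url):
        print(url)
    request = authorized_request(data_url, token="example-token")
    print(request.get_header("Authorization"))
```

Building the request separately from sending it keeps the sketch runnable without network access. A client on the HPC side would pass the resulting `Request` to `urllib.request.urlopen` or use a dedicated seismological client library.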

Resource usage

As the purpose of this project is mainly to support other TeRABIT use cases, it directly used only the GARR network component of the infrastructure, for the actual transmission of the input data.

What's next

Part of the future work will be devoted to implementing an additional webservice to provide supplementary seismic event parameters, which are usually not available in preliminary event analyses. These parameters, such as seismic moment and corner frequency, can greatly increase the precision of some numerical simulations, for example the calculation of hazard scenarios. We also plan to make the token authentication system fully operational, so that in the near future we can continue testing the interaction of the service with other TeRABIT case studies running directly on the TeRABIT infrastructures, such as “gmprocess”. Our hope is that the simplified token access will allow us to fully exploit the speed and stability of the GARR network. The implementation of the seismic event parameters webservice will also allow us to test the interaction with the “UrgentShake” TeRABIT case study, which uses these parameters as input for the calculation of rapid hazard scenarios.

Workflow of the project. A physical SeisComP server located at the OGS headquarters handles the collection of data and metadata for the target area in northeastern Italy and exposes them via dedicated webservices, together with event localization (and in the future also event parameters). These services can be interrogated through APIs over the GARR network by different seismological case studies operating inside the TeRABIT framework.

Laura Cataldi

Istituto Nazionale di Oceanografia e di Geofisica Sperimentale

I am a seismologist with a background in physics, currently working as a technologist for OGS. My expertise is in the field of seismological data analysis and modelling, with a focus on the estimation of ground motion parameters and on the modelling of Fourier amplitude spectra. I developed and shared most of the codes used in my work, with a preference for Python and a basic knowledge of C. My activities also include data retrieval and management, both in terms of ensuring the functioning of seismic monitoring arrays and of managing dedicated data servers. For this reason, I have also developed expertise in data and metadata curation and in the setup of webservices, to grant easy and standardized access to high-quality data. I support data FAIRness and I am currently in the process of obtaining the FAIR Implementer certificate.